4 Things Industry 4.0 03/02/2026

Happy Monday, and welcome to meteorological spring.

Yes, that's a real thing. While calendar spring doesn't show up until March 20th, meteorologists decided March 1st is close enough. You know who else likes to round up on timelines? Every vendor who's told you their IIoT platform will be "production-ready by Q1."

And speaking of uptime promises — did anyone else try to use Claude this morning and get the digital equivalent of a "gone fishing" sign? Somewhere out there, a vibe coder was just staring at a blank terminal for three hours wondering how people used to write code by hand.

Anyway — this week's newsletter has a theme: the tools you choose matter more than the tools you hype.

We've got an acquisition that could reshape how open source plays at the industrial edge. A philosophy from ARC Forum 2026 that might save you from ever ripping out legacy hardware again. A robot vacuum that exposed a security flaw so fundamental it should make every IIoT engineer wince. And a developer debate that's asking whether the hottest AI protocol in manufacturing is already being replaced by something your team has used for decades.

Four stories. All different. All pointing in the same direction: the smartest move in Industry 4.0 isn't always the newest one — sometimes it's the simplest one done right.

Here's what caught our attention:

SUSE Acquires Losant — And Open Source Just Got a Seat on the Factory Floor

SUSE — the company best known for enterprise Linux and Kubernetes — just bought Losant, a low-code Industrial IoT platform out of Cincinnati. And if you're not paying attention to this deal, you should be. It might be the most consequential IIoT acquisition of 2026 so far.

The details:

Losant is a Cincinnati-based company founded in 2015 that provides a low-code IIoT platform featuring a visual workflow engine and customizable dashboards to help enterprises convert complex device data into operational value (FinSMEs). Think drag-and-drop logic for your sensor data: no writing Python scripts at 2am to get a temperature alert working.

The platform includes built-in industrial connectors for protocols like Modbus, OPC UA, BACnet, and SNMP (Losant), which means it already speaks the languages your PLCs and building controllers use. It handles everything from device management to edge compute to data visualization, and it was recognized in Gartner's 2025 Magic Quadrant for Global Industrial IoT Platforms (SDxCentral).

Now here's where it gets interesting. SUSE says this acquisition completes its "Edge vision" by extending from the Near and Far Edge directly to the Tiny Edge (SUSE): those sensors and constrained devices that are too small to run a full Linux stack with Kubernetes.

As Keith Basil, VP and GM of SUSE's Edge Business Unit, explained: early on, they thought getting device connectivity plumbed into Kubernetes was the win. But it wasn't enough (SDxCentral). Customers didn't just need infrastructure; they needed operational awareness. What are the actuators doing? What are the sensors sensing? What are the cameras seeing?

That's the gap Losant fills.

Why it matters for manufacturing:

Here's the thing about most IIoT platforms on the market: they're proprietary, they're expensive, and switching costs are brutal. You get locked into one vendor's ecosystem, and three years later you're paying licensing fees that make your CFO cry.

SUSE is taking a different approach. The company plans to open source Losant's technology and work with open source communities on interface standardization, interoperability, and process automation (IT Brief UK). That's not just corporate lip service: SUSE has a track record of over 30 years of actually doing open source at the enterprise level.

And there's one more detail buried in the SDxCentral interview that caught our eye: Losant has already stubbed out a Model Context Protocol (MCP) integration into the platform, enabling LLMs and AI agents to connect and communicate with industrial data (SDxCentral). Basil said they're working to demo LLM integration with the industrial platform so you could query the state of your operations via plain text.

Imagine asking an AI agent: "What's the vibration trend on Compressor 3 over the last 48 hours?" and getting an answer pulled directly from your edge devices. That's where this is heading.

Real-world scenario:

You're a mid-size manufacturer running a mix of Allen-Bradley PLCs, some legacy Modbus sensors, and a handful of newer IoT devices. Right now, getting all of that data into one dashboard probably involves three different vendor platforms, two integrators, and a spreadsheet someone named "MASTER_v3_FINAL_REAL.xlsx."

With SUSE's combined stack, you'd get SUSE Linux Micro and K3s handling infrastructure at the edge, Losant's visual workflow engine handling the application logic and dashboards, and built-in industrial protocol support connecting everything — all on open standards, with the option to self-host or run as SaaS.

SUSE expects the integration with their edge portfolio to be completed by April (SDxCentral).

The bottom line: When an enterprise open source heavyweight acquires a Gartner-recognized IIoT platform and promises to open source the whole thing, that's not just an acquisition — it's a bet that open beats proprietary at the industrial edge. Keep your eye on this one.

SDxCentral's deep-dive interview with Keith Basil →

"MCP Is Dead. Long Live the CLI." — Or Is It?

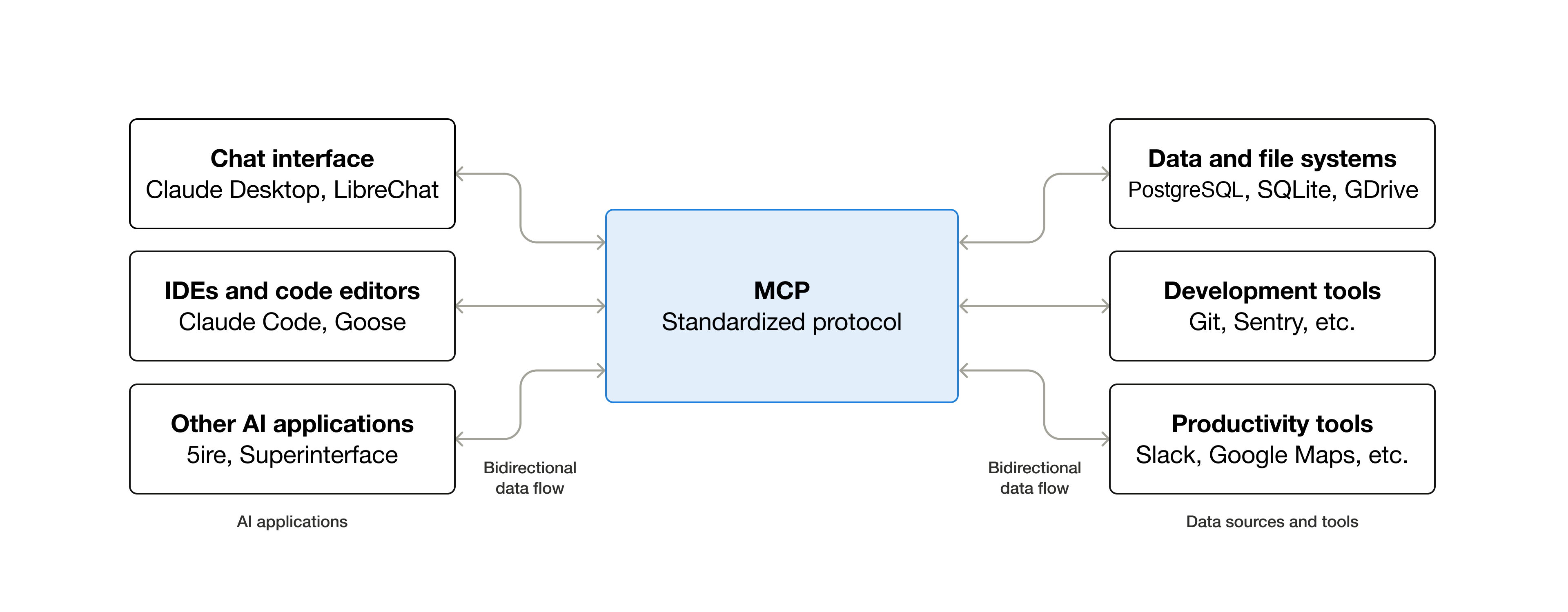

Remember last month when we told you MCP (Model Context Protocol) was becoming the USB-C of AI infrastructure? That it was the foundation everything would be built on?

Well. A developer named Eric Holmes just published a blog post titled "MCP is Dead. Long Live the CLI," arguing that MCP is already dying, and that the industry would be better off without it (Ejholmes). The post hit the top of Hacker News, sparked a heated debate, and forced a question worth asking: did we all get a little too excited?

Let's break it down.

The case against MCP:

Holmes isn't some random troll. He's a developer who's actually shipped production systems using MCP, and his criticisms are specific:

MCP servers are processes: they need to start up, stay running, and not silently hang. CLI tools are just binaries on disk. No background processes, no state to manage, no initialization dance (Ejholmes).

His biggest gripes:

- Initialization is flaky. He's lost count of the times he's restarted Claude Code because an MCP server didn't come up properly (Ejholmes).

- Authentication is painful. Using multiple MCP tools means authenticating each one separately. CLIs with SSO or long-lived credentials just don't have this problem.

- Permissions are all-or-nothing. You can allowlist MCP tools by name, but you can't scope to read-only operations or restrict parameters. With CLIs, you can allow a "view" command but require approval for a "merge" command (Ejholmes).

- Composability is limited. You can pipe CLI output through tools like jq, grep it, redirect to files. With MCP, you're stuck with whatever the server decided to return (Hacker News).
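To make the permissions gripe concrete, here is a minimal Python sketch of command-level scoping for a CLI. Everything in it is an assumption for illustration: the tool name, the subcommand prefixes, and the three outcomes (`allow`, `ask`, `deny`) are hypothetical and are not taken from Holmes's post or from any particular agent's permission system.

```python
import shlex

# Hypothetical policy: subcommand prefixes an agent may run unattended.
AUTO_ALLOW = {("pr", "view"), ("pr", "list"), ("issue", "view")}


def decide(command_line: str, tool: str = "gh") -> str:
    """Return 'allow', 'ask', or 'deny' for a proposed shell command."""
    parts = shlex.split(command_line)
    if not parts or parts[0] != tool:
        return "deny"              # empty input or an unexpected binary
    prefix = tuple(parts[1:3])     # e.g. ("pr", "view")
    if prefix in AUTO_ALLOW:
        return "allow"             # read-only commands run without a prompt
    return "ask"                   # anything else needs a human to approve


print(decide("gh pr view 123"))    # allow
print(decide("gh pr merge 123"))   # ask
print(decide("rm -rf /tmp/x"))     # deny
```

The point of the sketch is only that a command line carries enough structure to separate "view" from "merge"; a real policy would also need to consider flags and arguments.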

His conclusion? If you're a company investing in an MCP server but you don't have an official CLI, stop and rethink. Ship a good API first, then ship a good CLI (Ejholmes).

OK, but here's the other side:

Holmes makes valid points about developer workflow. If you're a software engineer running AI agents from a terminal, CLIs absolutely feel faster, simpler, and more debuggable. No argument there.

But here's where the manufacturing world diverges from the software development world — and why this debate isn't as clear-cut as the headline suggests.

CLIs assume a developer at a keyboard. Your maintenance supervisor isn't piping kubectl output through jq. Your plant manager isn't running shell scripts. The people who need AI-powered insights on the factory floor aren't command-line users — they're dashboard users, HMI users, mobile app users.

MCP wasn't designed just for developers in terminals. It was designed for applications that need to connect AI models to external tools and data — including web apps, mobile apps, and industrial platforms where there's no terminal in sight.

As several commenters in the Hacker News discussion pointed out, MCP shines when a human is using an AI assistant interactively, especially in IDE integrations, multi-AI environments, and organizations that want a common interface for AI tools (ModelsLab). It struggles more in automated pipelines where reliability and throughput matter most.

And remember what we covered in Article 1? SUSE's Keith Basil specifically mentioned that Losant has already stubbed out MCP integration so that LLMs and AI agents can query industrial operational data via plain text. That's not a CLI use case. That's a platform integration use case — exactly where MCP was designed to work.

The nuanced take:

Here's what we think the real lesson is. It's not "MCP vs. CLI." It's "use the right tool for the job" — which, ironically, is exactly what we preach about technology choices on the factory floor.

| Use Case | Better Fit |
|---|---|
| Developer running AI agents in a terminal | CLI |
| Automated batch processing pipelines | CLI / Direct API |
| AI assistant in an IDE (Cursor, VS Code) | MCP |
| Industrial platform connecting AI to sensor data | MCP |
| Mobile app querying production data via natural language | MCP |
| Quick one-off scripts and data wrangling | CLI |

The pattern emerging is that MCP makes sense when there's a human in the loop using an AI assistant interactively, while direct APIs and CLIs win when you're building automated pipelines where reliability, debuggability, and throughput matter most (ModelsLab).

What this means for your shop floor:

If you're evaluating AI agent architectures for manufacturing — and you should be — don't get caught up in the "MCP is dead" hot takes. Instead, ask these questions:

- Who's using the AI tool? If it's engineers in terminals, CLIs might be plenty. If it's operators, supervisors, or managers through an application, MCP is probably the right integration layer.

- What are you connecting to? If it's one data source and a simple query, you don't need a protocol. If it's five systems that all need to work together, MCP's standardized interface starts earning its keep.

- How important is auditability? MCP's structured tool calls are easier to log and trace than arbitrary shell commands — a real consideration when you're dealing with production systems.
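On the auditability question, a rough Python sketch shows why a structured tool call is easy to log. It assumes the request arrives as an MCP-style JSON-RPC message with method `tools/call` and a `params` object holding `name` and `arguments`; the tool name `get_vibration_trend`, the user label, and the log fields are hypothetical.

```python
import json
from datetime import datetime, timezone


def audit_record(request: dict, user: str) -> str:
    """Turn an MCP-style tools/call request into one JSON log line."""
    if request.get("method") != "tools/call":
        raise ValueError("not a tool call")
    params = request.get("params", {})
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "request_id": request.get("id"),
        "tool": params.get("name"),
        "arguments": params.get("arguments", {}),
    }
    return json.dumps(record, sort_keys=True)


# Hypothetical request an assistant might send on behalf of a supervisor.
example = {
    "jsonrpc": "2.0",
    "id": 7,
    "method": "tools/call",
    "params": {
        "name": "get_vibration_trend",
        "arguments": {"asset": "compressor-3", "hours": 48},
    },
}
print(audit_record(example, user="supervisor-a"))
```

Because the tool name and arguments are already separate fields, the log can be filtered by tool or asset without parsing free-form shell text.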

The bottom line: MCP isn't dead. CLIs aren't obsolete. The developer who says "just use a CLI" is solving a different problem than the platform engineer who says "we need a standard integration protocol." Both are right — for their context. The mistake is treating a developer workflow debate as an architecture decision for industrial platforms.

Read "MCP is Dead. Long Live the CLI" →

Our February 3rd MCP deep-dive →

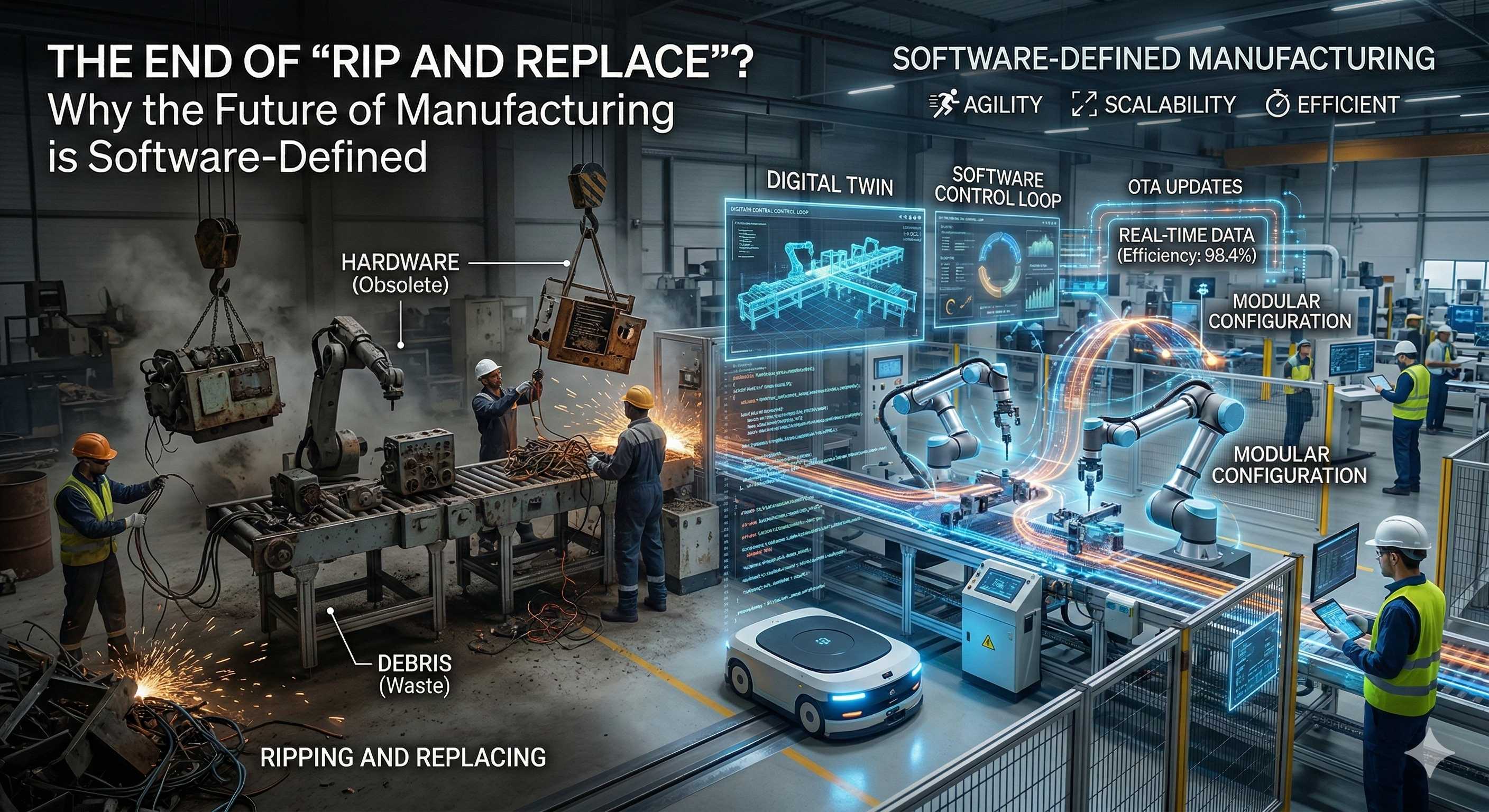

Software-Defined Automation — The End of "Rip and Replace"?

If you've ever stared at a perfectly functional PLC from 2008 and thought, "This thing still works great, but I can't run modern analytics on it without gutting the whole system" — this article is for you.

At ARC Forum 2026, ABB's Luis Duran laid out a vision for something called Software-Defined Automation (SDA) — and it might be the most important architectural concept in industrial automation right now.

So what is it?

Here's the idea in plain language: SDA is about decoupling control applications and operator screens from the specific, tightly coupled hardware they've traditionally been locked to (IIoT World). Instead of your control logic being married to one vendor's proprietary controller, you separate the software layer from the hardware layer.

Think of it like your smartphone. You don't buy a new phone every time an app updates. The hardware and software evolve independently. SDA brings that same concept to the factory floor.

The architecture relies on three things: separating software from hardware, utilizing network-centric I/O, and implementing a solid information model that provides context to signals (IIoT World).
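The "information model that provides context to signals" piece can be pictured with a small Python sketch. This is an illustration under stated assumptions, not ABB's design: the I/O address, asset path, unit, and scaling factor are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SignalContext:
    asset: str          # where the signal lives in the plant hierarchy
    measurement: str    # what the number means
    unit: str           # engineering unit after scaling
    scale: float        # raw counts -> engineering units
    offset: float = 0.0


# Hypothetical model: one network I/O channel mapped to its meaning.
MODEL = {
    "io/rack2/ch4": SignalContext(
        asset="packaging-line/filler-1",
        measurement="temperature",
        unit="degC",
        scale=0.1,
    ),
}


def contextualize(address: str, raw: int) -> dict:
    """Attach asset, meaning, and units to a raw I/O value."""
    ctx = MODEL[address]
    return {
        "asset": ctx.asset,
        "measurement": ctx.measurement,
        "value": raw * ctx.scale + ctx.offset,
        "unit": ctx.unit,
    }


print(contextualize("io/rack2/ch4", 215))
```

The control application reads the contextualized record rather than the raw channel, which is what lets the hardware underneath change without rewriting the logic.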

Why this matters for your plant:

For decades, upgrading your automation capabilities meant the dreaded "rip and replace" — tearing out expensive legacy hardware just to access modern software features. New HMI? New controller. Better analytics? New platform. It's expensive, risky, and incredibly disruptive to production.

As ABB's Duran put it, the strategy must be "Innovation with Continuity": bringing innovation to the user while preserving the investment they've already made, whether that's in applications they developed or hardware that still has useful life (IIoT World).

The industry is moving toward a model where you can port applications from existing controllers to different platforms without touching the wires (IIoT World).

Translation: Your control logic moves. Your wiring stays. Your budget thanks you.

This represents a shift from CAPEX-heavy "big bang" upgrades to an OPEX-friendly continuous improvement model (IIoT World). Instead of saving up for a massive migration every 10-15 years, you modernize incrementally, swapping out software layers while your physical infrastructure keeps running.

Real-world scenario:

You're running a packaging line with a DCS that was installed in 2012. The hardware is rock solid. The I/O works fine. But you can't integrate AI-based quality inspection, you can't run edge analytics, and your engineering team — most of whom graduated after 2015 — finds the programming environment painful to work in.

With SDA, you'd keep the physical I/O and field wiring exactly as-is. You'd port your control applications to a general-purpose compute environment. You'd layer on modern analytics, AI, and visualization tools. And your newer engineers? They'd work in modern software design environments they actually understand.

That workforce piece is a bigger deal than people realize. The industry faces a significant challenge as experienced professionals retire, and new digital-native engineers require modern software design environments they can understand and work with comfortably (IIoT World).

ABB is already building this. Their "Automation Extended" program, announced in February, introduces a separation-of-concerns architecture with two distinct layers: a software-defined control environment for deterministic process control, and a digital environment for advanced applications, edge intelligence, and real-time analytics (ABB). New capabilities get deployed without touching mission-critical operations.

And they're not alone. Schneider Electric's Foxboro SDA takes a similar approach, building on the universalautomation.org framework and emphasizing open interoperability across a qualified ecosystem rather than confinement to a single vendor stack (ArcWeb).

One thing to watch: Not all SDA implementations are created equal. As one analyst noted, some solutions are "software-defined, but not open," effectively still proprietary ecosystems despite abstracting software from hardware (ArcWeb). If your vendor's version of SDA only works with their hardware, that's not decoupling; that's just rebranding.

The bottom line: The rip-and-replace era isn't dead yet, but it's on life support. Software-Defined Automation gives manufacturers a way to modernize at their own pace, protect existing investments, and attract the next generation of engineers — all without shutting down a single production line. Ask your automation vendor where they stand on SDA. If they can't answer clearly, that tells you something.

Read the full IIoT World article →

ABB Automation Extended announcement →

ARC's deep-dive on Foxboro SDA →

A Word from This Week's Sponsor

Litmus — The Infrastructure Behind Industrial AI

Last week at ProveIt!, Litmus delivered one of the most compelling demonstrations of the entire event.

As a Title Sponsor, they didn’t just talk about AI.

They demonstrated how modern industrial infrastructure becomes the foundation upon which AI-native applications can actually run.

Industrial AI doesn’t fail because of models.

It fails because of infrastructure.

Disconnected PLCs.

Fragmented OT data.

Cloud-first architectures that ignore edge reality.

That’s the gap Litmus is built to solve.

Litmus Edge is a complete edge data platform designed to simplify OT-IT data pipelines and make industrial AI possible at scale.

With 250+ industrial connectors and no-code integration, Litmus enables manufacturers to:

• Connect and process real-time OT data from virtually any system

• Contextualize and normalize data at the edge — not in post-processing

• Deploy analytics and AI with low latency and high reliability

• Scale across sites without losing governance or control

This isn’t about sending more data to the cloud.

It’s about creating structured, contextualized intelligence at the edge — where operations actually happen.

What stood out at ProveIt! was how Litmus embeds AI inside context-aware industrial architecture.

From real-time data collection to centralized management to AI deployment, the platform is built for production environments — not lab demos.

And for engineers who want to get hands-on, the Litmus Edge Developer Edition provides full platform access with a resettable license. No watered-down trial. No artificial limits.

If your organization is serious about bridging OT and IT — and building infrastructure that AI can actually depend on — Litmus is a platform worth understanding.

Want to kick the tires on Developer Edition?

Link Here: https://litmus.io/litmus-edge-developer-edition

A Guy With a PlayStation Controller Just Exposed the Biggest Problem in IIoT Security

Here's a story that should keep every IIoT engineer up at night — and it starts with a robot vacuum and a PS5 controller.

AI strategy engineer Sammy Azdoufal bought a $2,000 DJI Romo robot vacuum and decided, just for fun, to try controlling it with his PlayStation 5 controller (Tech Times). He used an AI coding assistant to reverse-engineer how the vacuum talked to DJI's cloud servers via a protocol called MQTT.

Here's what happened next: instead of just connecting to his own vacuum, roughly 7,000 Romo units across 24 countries started responding to him as their operator (DroneXL). Live camera feeds. Microphone audio. Real-time floor plans of strangers' homes. All of it, wide open.

He didn't crack a password. He didn't exploit a zero-day. He used his own device token, and the server just... handed him everything.

So what went wrong?

This is where it gets educational — because the protocol at the center of this story, MQTT, is the same one running in thousands of factories right now.

MQTT (Message Queuing Telemetry Transport) is a lightweight messaging protocol designed for IoT devices. It works through a central server called a broker. Devices publish data to topics (like "sensor/temperature/line3"), and other devices or systems subscribe to those topics to receive the data.

In a properly secured system, the broker enforces Access Control Lists (ACLs) — rules that say "this device token can only see topics belonging to this device." Think of it like a hotel key card that only opens your room.

DJI's MQTT broker had no topic-level access controls. Any authenticated client could subscribe to wildcard topics and read traffic from every device on the network (DroneXL).

It's like using your hotel room key to access every room in the building (ZME Science).
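The check that was missing can be sketched in a few lines of Python. This is a simplified illustration, not DJI's or any broker's actual implementation: the device IDs and the `devices/<id>/...` topic layout are hypothetical, and the matching ignores special cases such as `$`-prefixed system topics. It uses the standard MQTT wildcards, where `+` stands for one topic level and `#` for all remaining levels.

```python
# Hypothetical per-device grants; the topic layout is an assumption.
GRANTS = {
    "vacuum-001": ["devices/vacuum-001/#"],
    "vacuum-002": ["devices/vacuum-002/#"],
}


def filter_covers(granted: str, requested: str) -> bool:
    """True if every topic matched by `requested` is also matched by `granted`."""
    g_levels = granted.split("/")
    r_levels = requested.split("/")
    for i, g in enumerate(g_levels):
        if g == "#":
            return True               # grant covers everything below this level
        if i >= len(r_levels):
            return False              # request is shorter than the grant
        r = r_levels[i]
        if r == "#":
            return False              # request is broader than the grant
        if g == "+":
            continue                  # grant accepts any single level here
        if g != r:
            return False              # literal mismatch, or '+' vs a literal grant
    return len(g_levels) == len(r_levels)


def may_subscribe(client_id: str, requested: str) -> bool:
    """Allow a subscription only if it stays inside one of the client's grants."""
    return any(filter_covers(g, requested) for g in GRANTS.get(client_id, []))


print(may_subscribe("vacuum-001", "devices/vacuum-001/camera"))  # True
print(may_subscribe("vacuum-001", "devices/vacuum-002/camera"))  # False
print(may_subscribe("vacuum-001", "#"))                          # False
```

In hotel terms, `may_subscribe` is the door lock: the key card authenticates you, but the lock still decides which room it opens.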

But wait — wasn't the data encrypted?

Yes. DJI correctly had TLS encryption in place. And this is the part that trips people up.

TLS encryption only protects data in transit. It does nothing to prevent an authenticated user from seeing other people's data once connected (PBX Science). The connection was secure. The authorization was broken.

This is a critical distinction that matters just as much on a factory floor as it does with robot vacuums:

- Authentication = "Are you who you say you are?" (DJI got this right)

- Authorization = "What are you allowed to see and do?" (DJI got this completely wrong)

- Encryption = "Can someone eavesdrop on the conversation?" (DJI got this right too)

Two out of three isn't enough. You need all three.

Why this matters for manufacturing:

Here's the thing — MQTT isn't just for robot vacuums. It's one of the most widely deployed protocols in industrial IoT. Your Kepware server might be publishing OPC UA data to an MQTT broker. Your edge gateway is probably using MQTT to push sensor data to the cloud. Your predictive maintenance platform almost certainly subscribes to MQTT topics.

Now ask yourself: does your MQTT broker enforce topic-level ACLs?

If a single authenticated device on your network can subscribe to a wildcard topic (#) and see data from every sensor, every PLC, every production line — you have the same vulnerability DJI had. Except your data isn't floor plans and battery levels. It's production recipes, batch parameters, equipment status, and operational intelligence your competitors would love to see.

Real-world scenario:

You deploy an MQTT broker (Mosquitto, HiveMQ, EMQX — pick one) to collect sensor data from three production lines. Line 1 publishes to plant/line1/#, Line 2 to plant/line2/#, and so on. A new contractor brings a device onto the network, authenticates to the broker, subscribes to # (the wildcard for "everything"), and suddenly has real-time visibility into every topic on every line.

No hacking required. Just a missing ACL configuration.

What to do right now:

- Audit your MQTT broker ACLs. If you're running Mosquitto, check your acl_file configuration. If you don't have one, that's your answer.

- Enforce topic-level permissions. Every client should only access the topics it needs. No wildcard subscriptions without explicit authorization.

- Don't confuse encryption with authorization. TLS is necessary but not sufficient. Encrypted traffic that anyone can read isn't secure; it's just encrypted.

- Test it. Connect a test client to your broker and try subscribing to #. If you see data from devices that aren't yours, you have work to do.
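As a starting point for the audit step, here is a small Python sketch that scans ACL text for grants beginning with a wildcard level. It assumes the Mosquitto-style layout of `user <name>` lines followed by `topic [read|write|readwrite|deny] <filter>` lines; the usernames and topics are hypothetical, and the exact semantics should be checked against your broker's own documentation before relying on the result.

```python
ACCESS_TYPES = {"read", "write", "readwrite", "deny"}

# Hypothetical ACL text for the three-line plant in the scenario above.
SAMPLE = """\
# comment lines start with '#'
user line1-gateway
topic readwrite plant/line1/#

user line2-gateway
topic readwrite plant/line2/#

user contractor-laptop
topic read #
"""


def find_broad_grants(acl_text: str) -> list[tuple[str, str]]:
    """Return (user, topic) pairs whose grant starts with a wildcard level."""
    findings = []
    user = "<anonymous>"              # topic lines before any 'user' line
    for line in acl_text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue                  # skip blanks and comments
        keyword, _, rest = line.partition(" ")
        if keyword == "user":
            user = rest.strip()
        elif keyword == "topic":
            parts = rest.split(None, 1)
            if not parts:
                continue
            if parts[0] in ACCESS_TYPES and len(parts) == 2:
                access, topic = parts[0], parts[1].strip()
            else:
                access, topic = "readwrite", rest.strip()  # access type omitted
            if access != "deny" and topic.split("/")[0] in {"#", "+"}:
                findings.append((user, topic))
    return findings


print(find_broad_grants(SAMPLE))      # [('contractor-laptop', '#')]
```

A scan like this only catches obviously broad grants in the file. It does not replace the live test of connecting a client and subscribing to # to see what actually comes back.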

Security researcher Kevin Finisterre noted that a strikingly similar category of failure, broken server-side access controls, occurred with DJI back in 2017 (PBX Science). Nearly a decade later, same company, same problem. Don't let that be your plant.

The bottom line: A guy with a PlayStation controller and an AI coding assistant accidentally became a spy in 7,000 homes. The vulnerability? A missing access control list on an MQTT broker. If that sentence doesn't make you immediately want to check your own MQTT configuration, read it again.

Read the full breakdown (DroneXL) →

HiveMQ MQTT security best practices →

Byte-Sized Brilliance

The Protocol Securing Your Robot Vacuum Was Built for an Oil Pipeline in 1999

That MQTT vulnerability we just covered? Here's some context that makes it even more ironic.

MQTT, the protocol DJI used (poorly) to connect 7,000 robot vacuums, wasn't designed for smart homes. It was invented in 1999 by Andy Stanford-Clark of IBM and Arlen Nipper, now of Cirrus Link Solutions (yes, that Arlen Nipper, the same one who just received a lifetime achievement award at ProveIt! 2026), to monitor oil pipelines via satellite in the desert.

The constraints were brutal: expensive satellite bandwidth, unreliable connections, remote devices that couldn't be physically accessed for troubleshooting. So they built the lightest, most efficient publish/subscribe messaging protocol they could — one that could push data reliably over connections as thin as a straw.

Today, MQTT handles an estimated 5+ billion messages per day across industrial and consumer IoT deployments worldwide. It's in everything — factory floor sensors, connected vehicles, smart buildings, medical devices, agricultural monitors, and yes, robot vacuums that accidentally expose their camera feeds to strangers.

For perspective: when Stanford-Clark and Nipper wrote the first MQTT spec, Google was a one-year-old startup running out of a garage, Wi-Fi had just been branded (it was called IEEE 802.11b before that), and the total number of internet-connected devices on Earth was roughly 300 million. Today, there are over 17 billion IoT devices — and MQTT is the backbone of a huge percentage of them.

The irony? A protocol purpose-built for one of the harshest, most security-conscious environments in industrial operations — remote oil infrastructure — got deployed on consumer devices with zero access controls. Nipper and Stanford-Clark designed MQTT to work reliably in environments where failure meant losing contact with equipment hundreds of miles from the nearest technician. DJI deployed it without bothering to check who was subscribing to what.

The protocol wasn't the problem. The implementation was. That's a lesson that applies to just about every technology we covered this week — from Software-Defined Automation to MCP to open-source IIoT platforms. The tool is only as good as the team configuring it.
