4 Things Industry 4.0 02/09/2026


Happy February 9th, Industry 4.0!

Valentine's Day is this Saturday, and if you're still scrambling for a gift — might we suggest a digital twin? It's always available, never argues about thermostat settings, and according to Siemens and NVIDIA, it can now actually think for itself.

Okay, maybe stick with flowers. But the point stands: AI is getting more intimate with manufacturing than ever before.

On January 30th, Anthropic (the company behind Claude) dropped 11 open-source plugins for its Cowork platform. No press conference. No launch event. Just a GitHub repo and a blog post. Within days, SaaS stocks from Salesforce to Thomson Reuters were in free fall. Investors coined a new term for it: the "SaaSpocalypse." Roughly $285 billion in market value, gone in less than a week.

Meanwhile, the Physical AI race is heating up fast. Vention just raised $110 million to make factory automation work on the first try (yes, really), and Siemens and NVIDIA announced they're building an "Industrial AI Operating System" — turning digital twins from pretty 3D models into the brains that actually run your factory.

And if you've been skeptical about cloud-only AI for your plant floor? You're not alone. The on-premise AI movement is picking up serious momentum, and for good reason.

The theme this week? AI isn't just getting smarter — it's getting closer. Closer to your production lines, closer to your workflows, and closer to replacing software you're currently paying a fortune for.

Here's what caught our attention:

Siemens and NVIDIA Are Building an Operating System for Your Factory

If you've been hearing "digital twin" for years and thinking "cool 3D model that nobody actually uses" — you're not wrong. And Siemens and NVIDIA are betting big that they can change that.

At CES 2026, the two companies announced they're co-developing an Industrial AI Operating System — a platform designed to span the entire manufacturing lifecycle, from product design to factory operations to supply chain management. It's a massive vision. It's also the kind of announcement that sounds incredible on stage and gets a lot harder once it hits the shop floor.

What they're promising:

Siemens is combining its Xcelerator platform (the industrial software suite behind Teamcenter, Opcenter, and others) with NVIDIA's Omniverse simulation engine and AI infrastructure. The pitch? Turn digital twins from passive 3D mirrors into active intelligence that predicts, optimizes, and adapts in real time.

Here's the highlight reel:

- 9 new AI copilots embedded across Teamcenter, Polarion, and Opcenter

- Digital Twin Composer launching mid-2026 on the Xcelerator Marketplace

- A fully AI-driven adaptive manufacturing site planned for Siemens' electronics factory in Erlangen, Germany — this year

- NVIDIA providing the AI compute backbone; Siemens committing hundreds of industrial AI engineers

The early results — if you trust them:

PepsiCo is an early adopter, and the reported numbers are eye-catching: 90% early problem detection, 20% throughput increase, and 10-15% reduction in capital expenditure. Impressive — but worth noting these come from a keynote stage, not a peer-reviewed case study. We'd love to see those numbers after 12 months of full-scale deployment, not just a pilot showcase.

Where the skepticism kicks in:

Let's be real about a few things.

First, "Industrial AI Operating System" is doing a lot of heavy lifting as a phrase. What does that actually mean for a plant running 15-year-old PLCs and a patchwork of historians, SCADA systems, and Excel spreadsheets? Siemens and NVIDIA are essentially saying the future of manufacturing runs on their shared platform — but most factories aren't starting from a clean Siemens stack. They're starting from a mess.

Second, the Erlangen factory they're showcasing? That's a Siemens electronics factory — one of the most digitally mature manufacturing environments on the planet. Proving this works there is like Tesla proving self-driving works on a closed test track. The real question is whether it works at a mid-market food & beverage plant with three different SCADA vendors and an OT team of two.

Third, we've seen big partnership announcements before. Remember when every major vendor was going to "revolutionize manufacturing with the cloud"? The technology was real, but the gap between the press release and the plant floor took years to close — and in many cases, it still hasn't.

Why it still matters:

All that said, this partnership is significant. Siemens is the largest industrial software company on the planet. NVIDIA is the AI infrastructure backbone. The fact that they're investing hundreds of engineers and committing to real production sites — not just demos — means this is more than a marketing play.

The Digital Twin Composer is particularly worth watching. If it genuinely lets teams build physics-based simulations without a PhD and a six-month implementation, that changes the accessibility equation for digital twins in a meaningful way.

And the broader signal is clear: digital twins are moving from visualization tools to decision-making engines. Even if this specific partnership takes longer than promised, the direction is set.

The bottom line: This is one of the biggest industrial AI bets we've seen. The vision is compelling, the players are serious, and the early data sounds great. But manufacturing has been burned by big promises before. Watch what ships to the Xcelerator Marketplace this year — not what was said on stage in January.

👉 Read more about the Siemens-NVIDIA partnership →

Anthropic Just Dropped 11 Plugins. Wall Street Lost $285 Billion. Here's What Actually Happened.

On January 30th, Anthropic — the company behind Claude — quietly released 11 open-source plugins for its Cowork desktop tool. No launch event. No keynote. Just a GitHub repo and a blog post.

Five days later, Wall Street coined a new word: "SaaSpocalypse."

What Anthropic actually released:

Cowork is Anthropic's desktop tool that lets Claude work directly with your files, apps, and workflows — no browser required. The new plugins extend that into specialized territory: legal contract review, sales call prep, compliance checks, data analysis, customer support automation. They're open-source, customizable, and available to all paying Claude subscribers.

The key detail most people missed? These plugins are designed to be built and edited without deep technical expertise. Anthropic isn't just offering pre-built tools — they're offering a framework for building your own.

Then the market reacted:

Between February 3rd and 6th, roughly $285 billion in market value evaporated from software companies globally. Some highlights:

- Thomson Reuters: Down 16% — their worst single-day drop in company history

- LegalZoom: Down 20%

- RELX: Down 14% — worst day since 1988

- Wolters Kluwer: Down 13%

- Salesforce, ServiceNow, Adobe: Each down ~7%

- SAP: Down 33% from yearly highs

- Goldman Sachs Software Index: Down 6%

Indian IT got hammered too — Infosys dropped 7%, TCS fell 6%.

Why investors panicked:

The fear isn't about 11 plugins. It's about what they signal.

For years, companies like Salesforce, Thomson Reuters, and Adobe have charged enterprise prices for specialized workflow software. Legal research tools. CRM platforms. Document management systems. The value proposition was always: "We understand your industry. We built the workflows. Pay us $50-200/seat/month."

Now Anthropic is saying: "What if an AI agent could do 80% of that workflow for $20/month?"

Jefferies put it bluntly in their analyst note: Anthropic is no longer just supplying foundation models to other companies. They're building complete workflow solutions that compete directly with the application layer. And OpenAI launched its own "Frontier" platform the same week, treating platforms like Salesforce and Adobe as data silos to harvest rather than partners to support.

A Bain report estimated that up to 30% of tech services revenue could disappear from AI automation. Whether that's accurate or panic-driven is debatable. But the direction isn't.

The skeptic's take:

Before you assume every SaaS vendor is toast, some perspective:

Nasscom called the concerns "misplaced," arguing that enterprise value requires human context, industry knowledge, and complex workflows that plugins can't replicate overnight. Cognizant's CEO pointed out that despite three years of ChatGPT hype, actual enterprise value hasn't meaningfully shifted yet. Wedbush Securities called the sell-off an "Armageddon scenario far from reality" — enterprises won't overhaul billions in infrastructure because of a GitHub repo.

They're probably right... for now. The question isn't whether AI agents replace Salesforce tomorrow. It's whether the pricing power of specialized SaaS holds up when a general-purpose AI can handle 60-70% of the same tasks.

Why manufacturers should care:

You're a SaaS customer. Your CMMS, your ERP add-ons, your quality management system, your training platforms — most of them are SaaS products charging per-seat pricing for specialized workflows.

If AI agents can increasingly handle compliance documentation, maintenance scheduling, quality reporting, and operator training workflows, the question becomes: are you paying $150/seat/month for a workflow that a $20/month AI agent could handle?
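To put rough numbers on that question, here is a minimal sketch of the per-seat math. The seat count is a hypothetical plant size, not a figure from any vendor, and it assumes the $20/month agent is also licensed per user.

```python
def annual_seat_cost(seats: int, price_per_seat_month: float) -> float:
    """Yearly spend for a subscription billed per seat, per month."""
    return seats * price_per_seat_month * 12


# Hypothetical plant with 40 licensed users (an assumption for illustration).
seats = 40

saas_cost = annual_seat_cost(seats, 150.0)  # specialized SaaS at $150/seat/month
agent_cost = annual_seat_cost(seats, 20.0)  # AI agent at $20/seat/month (assumed per user)
gap = saas_cost - agent_cost

print(f"SaaS:  ${saas_cost:,.0f} per year")
print(f"Agent: ${agent_cost:,.0f} per year")
print(f"Gap:   ${gap:,.0f} per year")
```

The gap only matters for the share of the workflow an agent can genuinely take over, so treat it as a ceiling on savings, not a forecast.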

That doesn't mean cancel your MES contract tomorrow. But it does mean:

- Watch your renewal conversations carefully. SaaS vendors feeling pricing pressure may start offering better deals — or locking you into longer contracts before the market shifts further

- Start experimenting with AI agents for low-risk workflows — report generation, documentation, training content — to understand what's actually replaceable

- Ask your vendors what their AI strategy is. If the answer is "we added a chatbot," that's not a strategy

The bottom line: Eleven open-source plugins didn't destroy $285 billion in value. The realization that foundation model companies are moving up the stack destroyed $285 billion in value. Whether the SaaSpocalypse is overblown or early innings, manufacturers choosing software tools right now should be asking harder questions about long-term value — and paying close attention to what AI agents can do for $20 that they're currently paying $200 for.

👉 Explore Anthropic's Cowork plugins on GitHub →

👉 TechCrunch: Anthropic brings agentic plugins to Cowork →

👉 Yahoo Finance: 'Get me out' — Traders dump software stocks as AI fears erupt →

👉 Shelly Palmer: The SaaSpocalypse →

Vention Just Raised $110M to Make Factory Automation Work on the First Try. Yes, Really.

If you've ever deployed a new automation cell, you know the drill: months of engineering, weeks of integration, days of debugging, and a go-live that somehow still requires three guys standing around the cell "just in case."

Vention wants to kill that entire cycle. And they just raised $110M in Series D funding to do it.

What Vention actually does:

Vention is a Montreal-based platform that combines modular hardware, cloud software, and — increasingly — AI to let manufacturers design, order, and deploy automation systems faster than traditional integrators can return a quote.

Think of it as the difference between building a custom house from scratch versus assembling one from a proven catalog of components that are designed to work together. You design your automation cell in their cloud platform, order the hardware, and assemble it on your floor. Their platform handles the motion planning, robot programming, and system configuration.

The new funding round was led by Investissement Québec, with Fidelity Investments Canada, Desjardins Capital, and notably NVentures — NVIDIA's venture capital arm — all participating. That last one matters. When NVIDIA's VC puts money into a manufacturing automation company, it's a signal that the Physical AI thesis isn't just conference talk anymore.

The "Zero-Shot Automation" pitch:

Vention's big bet is what they're calling "Zero-Shot Automation" — systems that deploy seamlessly, require no integration gymnastics, and work correctly on the first attempt.

If you just laughed, that's fair. Anyone who's commissioned a new robotic cell knows that "works on the first try" sounds like marketing fiction. But here's what they're actually building toward:

- Automated configuration tools that generate machine specs from requirements

- An AI agent that handles system design decisions

- A robotic programming copilot that writes motion programs

- Fully autonomous AI-powered robotic applications that self-configure

The idea is that the AI handles the integration work that currently requires a controls engineer spending weeks tuning parameters, writing PLC logic, and testing edge cases. Whether it actually delivers "zero-shot" in the real world remains to be seen — but even getting to "three-shot" would be a massive improvement over the current six-month integration cycle most manufacturers deal with.

The numbers that matter:

Vention isn't a startup running on promises. They crossed $100M CAD in annual recurring revenue in December 2025, serve 4,000+ factories worldwide, and count Boeing, L'Oréal, and Lockheed Martin among their customers. Total funding is now over $300M CAD. They have 330 employees and are expanding into EMEA with part of this round.

More interesting than the customer logos is how enterprises are using the platform. According to Vention, large customers are building centralized Advanced Manufacturing Teams (AMTs) that design automation cells once in the cloud platform and deploy them identically across multiple sites, countries, and production lines. Design once, deploy everywhere.

That's a fundamentally different model than the traditional approach of hiring a different integrator at every plant and ending up with seven slightly different versions of the same automation cell — none of which share spare parts.

The skeptic's take:

"Zero-Shot" is a bold claim. In practice, every factory has quirks — floor conditions, utility configurations, legacy equipment that wasn't in the CAD model. No platform eliminates all of that friction.

And Vention's modular approach works best for relatively standard automation tasks: pick-and-place, machine tending, palletizing, assembly. If you need a highly customized welding cell for a unique part geometry, you're probably still calling an integrator.

The AI copilot features are also still early. Generating robot programs from natural language descriptions is impressive in demos, but production-grade motion planning for safety-critical applications requires rigorous validation, and "the AI wrote it" doesn't yet inspire confidence in most quality departments.

Why it matters for your plant:

The real story here isn't one company's funding round. It's that the automation deployment model is shifting.

The traditional model — hire an integrator, wait 6-12 months, spend $500K-$2M, hope it works — is increasingly competing against platforms that compress that cycle to weeks and let your own team handle the deployment. Not for everything. Not yet. But for a growing range of standard automation tasks, the math is changing fast.

If your automation backlog keeps growing because you can't get integrator time or budget approval for custom cells, platforms like Vention represent an alternative worth evaluating. The question isn't whether self-service automation platforms will replace traditional integrators — it's which tasks will shift first and how fast.

The bottom line: Vention's $110M raise — with NVIDIA's VC arm at the table — is another signal that the Physical AI and self-service automation wave is real and funded. "Zero-Shot Automation" might be aspirational, but even "dramatically faster automation" would be enough to change how mid-market manufacturers think about their next cell deployment.

👉 Read about Vention's $110M Series D →

👉 Vention's official press release →

👉 BetaKit's coverage of the round →

A Word from This Week's Sponsor


Canary Labs has been at the forefront of industrial analytics for decades — helping manufacturers turn raw time-series data into actionable insight at scale. Known for their deep historian expertise and advanced analytics engine, Canary enables teams to understand what’s happening in their processes and why — without ripping and replacing existing infrastructure.

At ProveIt! 2025, Canary Labs demonstrated how modern analytics can sit on top of operational data to deliver real-world value fast, from root-cause analysis to predictive insights that actually get used by operations teams.

Looking ahead to 2026, Canary Labs continues to push innovation in:

- High-performance industrial data historians

- Advanced analytics and pattern recognition

- Seamless integration with OT, IT, and UNS architectures

- Scalable analytics for quality, reliability, and performance improvement

Canary Labs isn’t just storing data; they’re helping manufacturers understand it, trust it, and act on it.

We’re proud to feature Canary Labs as a newsletter sponsor and as a sponsor of ProveIt! 2026.

Learn more about Canary here: www.canarylabs.com/

Try it Free here: Free Trial

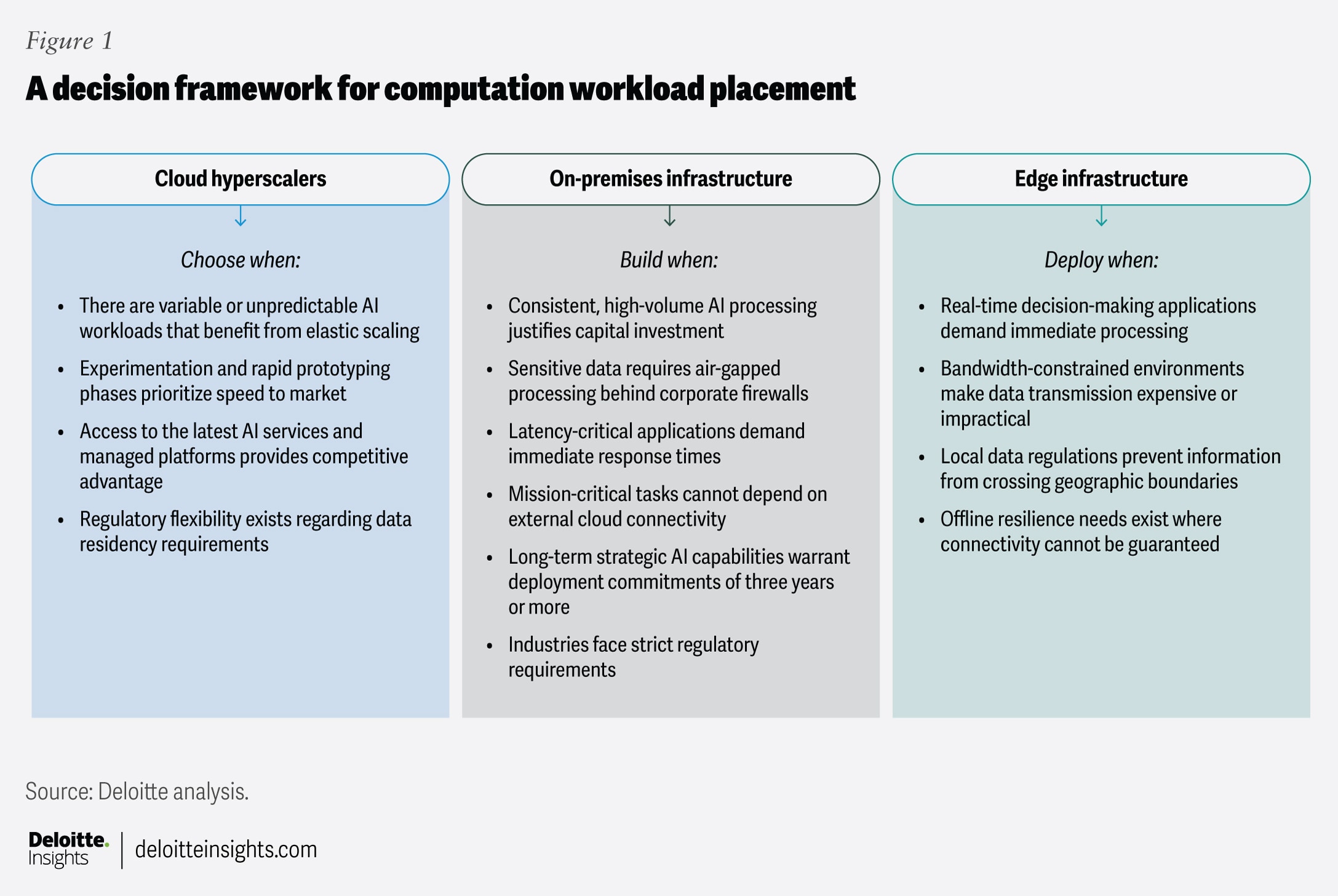

Your Factory Runs at 99% Uptime. Why Would You Risk That for a Cloud Migration?

While every headline screams about cloud AI, a growing number of manufacturers are doing something radical: they're keeping their AI on-premise. And it's not because they're behind the times — it's because they understand something the cloud vendors don't want to talk about.

A recent Quality Digest piece put it bluntly: small and midsize manufacturers — the ones that make up the backbone of U.S. production — aren't running greenfield tech stacks or cloud-native environments. They're running legacy databases, custom ERP layers, proprietary scripts, and decades-old equipment that's been iterated and optimized over years. Their systems run at 98-99% uptime. And nobody wants to risk that for a cloud migration.

Here's the reality nobody puts in the vendor pitch deck:

The cloud-first narrative assumes you're starting fresh. You're not. You've got a MES that's been customized over 15 years. An ERP system that's been bolted onto three different production lines. Historians full of process data that only two people in the plant understand. And a 24/7 operation where one unexpected software conflict can shut down a line that produces millions of dollars in product per week.

"Rip and replace" isn't modernization for most manufacturers — it's a risk to business continuity.

What's actually working instead:

The manufacturers seeing results in 2026 are deploying lightweight, on-premise AI agents that integrate directly with existing systems rather than replacing them. Think of it as adding a smart layer on top of what you already have — not tearing out the foundation.

These on-prem AI agents can analyze logs in real time, spot production anomalies that might go unnoticed, automate repetitive investigation work, and give frontline teams better information for faster decisions — all without changing a single machine or forcing workers onto entirely new interfaces.
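To make "spot production anomalies" concrete, here is a minimal sketch of one simple technique that runs comfortably on a local machine: a rolling z-score check. It is an illustration under our own assumptions, not how any particular vendor's agent works. The class name, window size, threshold, and sample readings are all hypothetical.

```python
from collections import deque
from statistics import mean, stdev


class RollingAnomalyDetector:
    """Flags readings that sit far from the recent rolling average."""

    def __init__(self, window: int = 20, threshold: float = 3.0):
        self.window = deque(maxlen=window)  # most recent normal readings
        self.threshold = threshold          # z-score cutoff for flagging

    def check(self, value: float) -> bool:
        """Return True if value is anomalous relative to the current window."""
        is_anomaly = False
        if len(self.window) >= 5:  # wait for a little history before judging
            mu = mean(self.window)
            sigma = stdev(self.window)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        if not is_anomaly:
            self.window.append(value)  # keep outliers out of the baseline
        return is_anomaly


# Hypothetical spindle-temperature readings in degrees C, with one spike.
readings = [70.1, 70.3, 69.8, 70.0, 70.2, 70.1, 69.9, 85.0, 70.2, 70.0]

detector = RollingAnomalyDetector(window=20, threshold=3.0)
flags = [detector.check(r) for r in readings]
anomalies = [r for r, flagged in zip(readings, flags) if flagged]
print(anomalies)
```

Nothing here needs a network connection, which is the point: the data and the decision both stay on the plant floor. A real deployment would read from a historian or log stream and would need tuning per signal.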

The approach is gaining momentum for three reasons:

- Downtime risk is real. Cloud migrations introduce variables your production schedule can't absorb. On-prem AI slots into what already works.

- Data sovereignty matters. Your process recipes, quality parameters, and production optimization data are your competitive advantage. Sending that to the cloud means trusting a third party with your secret sauce.

- Latency kills. When a robotic cell needs a decision in milliseconds, waiting for a round trip to AWS isn't an option. Edge and on-prem inference happens where the data lives.

The broader industry is catching on:

This isn't just a manufacturing opinion piece. Dell's 2026 edge AI predictions noted that running models locally will become the norm for organizations needing stable foundations and insulation from external disruptions. IBM's 2026 tech outlook highlighted that 93% of executives say factoring AI sovereignty into business strategy is now mandatory. Gartner predicts that by 2027, organizations will use small, task-specific AI models three times more than general-purpose large language models. And Deloitte's infrastructure report explicitly called out data sovereignty, latency sensitivity, and resilience requirements as primary drivers pushing enterprises back toward on-premise compute.

The edge AI market is projected to reach $118.69 billion by 2033, growing at 21.7% annually. That's not "anti-cloud" money — it's "right tool for the right job" money.

What this means for your plant:

The question isn't "when will we move everything to the cloud?" That was 2020's question. The 2026 question is: "How do we bring AI to the systems we already trust?"

Here's where to start:

- Identify one high-value, low-risk process for an on-prem AI pilot. Predictive maintenance on your most critical asset is the classic starting point — it's high-value data that benefits from local processing and doesn't require cloud scale.

- Audit your data sovereignty posture. Know what process data you're comfortable sending to the cloud and what stays on-site. Make this a deliberate architectural decision, not an afterthought.

- Evaluate hybrid architectures. The best approach for most plants isn't pure on-prem or pure cloud — it's edge AI for real-time decisions at the machine level, with cloud for long-term trend analysis and cross-facility benchmarking. Companies running hybrid report 40% faster response times for critical operations while cutting cloud costs by 30-50%.

- Ask your vendors hard questions. If your CMMS, quality, or analytics vendor requires cloud connectivity to function, understand what happens when that connection drops at 2 AM on a Saturday during a production run.

The bottom line: The most successful manufacturers in 2026 won't be the ones with the flashiest AI tools. They'll be the ones who embed AI in a way that strengthens the systems, workflows, and institutional knowledge that already make their operations productive. Cloud AI has its place. But for the plant floor, the smartest AI might be the one that never leaves the building.

👉 Read "The Next Wave of Industry 4.0" on Quality Digest →

👉 Dell's Edge AI Predictions for 2026 →

👉 Deloitte: The AI Infrastructure Reckoning →

Byte-Sized Brilliance

There Are More Possible Combinations of a Rubik's Cube Than Seconds Since the Big Bang

A standard 3x3 Rubik's Cube has 43,252,003,274,489,856,000 possible configurations. That's 43 quintillion. If you turned the cube to a new position every second since the Big Bang, 13.8 billion years ago, you'd have covered only about 1% of the possibilities.
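For the curious, the 43-quintillion figure can be rebuilt from the cube's mechanics and set against the age of the universe. This is a back-of-the-envelope sketch that assumes a 13.8-billion-year-old universe and 365.25-day years. The variable names are ours.

```python
from math import factorial

# Counting reachable states of a 3x3 cube:
#   8 corners can be permuted (8!) and twisted (3^7, the last twist is forced)
#   12 edges can be permuted (12!) and flipped (2^11, the last flip is forced)
#   corner and edge permutation parity must match, which halves the total
cube_states = factorial(8) * 3**7 * factorial(12) * 2**11 // 2

# Seconds since the Big Bang, assuming 13.8 billion years of 365.25 days each.
seconds_per_year = 365.25 * 24 * 3600
seconds_since_big_bang = 13.8e9 * seconds_per_year

ratio = cube_states / seconds_since_big_bang

print(f"{cube_states:,} cube states")
print(f"{seconds_since_big_bang:.3e} seconds since the Big Bang")
print(f"about {ratio:.0f} cube states per second of cosmic history")
```

On those assumptions the cube has roughly a hundred states for every second that has ever elapsed.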

And yet, every single one of those 43 quintillion states can be solved in 20 moves or fewer. Mathematicians call this "God's Number," and it was proven in 2010 using 35 CPU-years of computing time donated by Google.

Here's why that's a manufacturing story: the Rubik's Cube was invented in 1974 by Ernő Rubik, a Hungarian architecture professor who originally built it as a teaching tool for spatial relationships. He didn't realize he'd created a puzzle until he scrambled it and spent a month trying to solve his own invention.

Today, over 500 million cubes have been sold worldwide, making it the best-selling single product in toy history. The human world record for solving one? It stood at 3.13 seconds in 2023 and has since been edged a little lower. A robot built by MIT students did it in 0.38 seconds back in 2018, and newer machines are faster still.

The lesson? Sometimes the most complex-looking systems have elegant solutions hiding inside them. You just need the right algorithm — and maybe a robot that moves faster than a human can blink.
