4 Things Industry 4.0 12/8/2025

Presented by

Happy December 8th, Industry 4.0!

The lights are going up, the coffee is getting stronger, and the sprint to year-end is officially underway. While most people are thinking about holiday shopping and ugly sweaters, the tech world is busy shipping some of its biggest updates of the year. Google rolled out new ways to build AI agents without writing a line of code, AWS redefined what “serverless” means (again), and NVIDIA just reminded everyone that Moore’s Law may be slowing — but GPU scaling definitely isn’t.

Meanwhile, security teams are scrambling to patch React2Shell before attackers turn it into their own holiday tradition, and hyperscale infrastructure is smashing past limits we thought would hold for years. It’s a fitting end to 2025: innovation accelerating, foundations shifting, and the tools we rely on getting smarter by the week.

So grab a warm drink, find a quiet corner away from the blinking lights, and let’s dig into what’s shaping the factories, cloud platforms, and AI systems driving the next industrial era.

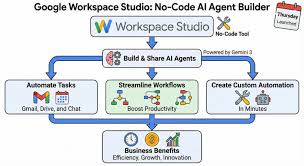

Google Workspace Studio — Your New AI Agent Workshop for Everyday Work

Google just launched Workspace Studio, a no-code platform that lets anyone build, manage, and share AI agents inside Google Workspace.

What’s changed:

- Users can now describe what they want automated in plain English — like “every Friday, email my team the sales dashboard” — and Workspace Studio (powered by Gemini 3) builds the agent for you.

- These agents go beyond simple rule-based automation. They reason, adapt, and carry context across apps (Gmail, Drive, Sheets, Chat). That means tasks like triaging support tickets, drafting weekly reports, or prioritizing emails can be handed off to AI without writing a single line of script.

- For manufacturing and Industry 4.0 users, this could mean less time wrestling spreadsheets or manual compliance logs — and more time focused on actual plant-floor decisions or integrating AI-powered analytics pipelines.

Why this matters to you:

Many manufacturers and integrators juggle email chains, approvals, change requests, vendor communications, SOP updates, and more. Workspace Studio gives you a quick way to bake AI automation directly into those workflows without waiting for IT, hiring developers, or writing custom integrations. It can reduce friction between engineers, operations, and admin teams, making factories and control rooms a little smarter with very little setup effort.

👉 Read the full announcement: https://workspaceupdates.googleblog.com/2025/12/workspace-studio.html

AWS Lambda Managed Instances — Serverless Simplicity Meets EC2 Power

AWS Lambda just got a major upgrade: the new Lambda Managed Instances option lets you run Lambda functions on EC2-based servers — including the latest Graviton4, high-network instances, or other specialized compute — while still keeping the zero-ops, serverless development model.

That means for predictable, high-throughput workloads (think IIoT data ingestion, steady analytics pipelines, batch jobs, or edge-cloud bridging for manufacturing systems), you get the flexibility of EC2 — specific CPU architecture, reserved-instance or savings-plan pricing, high memory/network throughput — plus Lambda’s familiar event-driven interface and managed scaling.

For manufacturers or industrial cloud deployments, this could make a big difference: you no longer have to re-architect functions into containers or manage servers manually just to get performance or cost efficiency. Instead, you get a hybrid model — serverless development with compute-class control — ideal for data-heavy, latency-sensitive, or consistently busy automation workloads.
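To make the "familiar event-driven interface" concrete, here is a minimal sketch of a Python Lambda handler for a steady IIoT ingestion workload. It assumes an SQS-style event (a `Records` list whose items carry a JSON `body`); the `machine_id` and `temperature_c` fields and the `TEMP_LIMIT_C` threshold are hypothetical, chosen only for illustration. The announcement suggests handler code like this stays the same whichever compute option runs it, but treat that as something to verify against the AWS docs for your own workload.

```python
import json
from collections import defaultdict

TEMP_LIMIT_C = 85.0  # hypothetical alarm threshold, not from the announcement


def handler(event, context):
    """Summarise one batch of sensor readings per machine."""
    per_machine = defaultdict(list)
    for record in event.get("Records", []):
        reading = json.loads(record["body"])  # assumed payload shape
        per_machine[reading["machine_id"]].append(float(reading["temperature_c"]))

    # One small summary per machine: how many readings, the peak, and an alarm flag.
    return {
        machine: {
            "count": len(temps),
            "max_c": max(temps),
            "over_limit": max(temps) > TEMP_LIMIT_C,
        }
        for machine, temps in per_machine.items()
    }


# Local smoke test with a hand-built event; no AWS account needed.
sample_event = {
    "Records": [
        {"body": json.dumps({"machine_id": "press-1", "temperature_c": 71.5})},
        {"body": json.dumps({"machine_id": "press-1", "temperature_c": 88.0})},
        {"body": json.dumps({"machine_id": "oven-2", "temperature_c": 64.2})},
    ]
}
result = handler(sample_event, None)
print(json.dumps(result, indent=2))
```

Because the handler is a plain function, it can be exercised locally like this before you decide which compute option to deploy it on.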

👉 Read more about Lambda Managed Instances: https://aws.amazon.com/blogs/aws/introducing-aws-lambda-managed-instances-serverless-simplicity-with-ec2-flexibility/

NVIDIA’s MoE Breakthrough — 10× Faster AI Inference with the GB200 NVL72

NVIDIA just dropped new benchmark numbers showing its rack-scale “GB200 NVL72” servers massively speed up modern “Mixture-of-Experts” (MoE) AI models — delivering up to 10× the inference performance compared with prior-generation systems.

Why this matters:

- MoE models only activate a subset of their “experts” per request (rather than firing up every parameter) — giving big efficiency gains — but until now, scaling them was constrained by memory, interconnect latency, and coordination overhead.

- The GB200 NVL72 rack ties together 72 of NVIDIA’s latest “Blackwell” GPUs with high-bandwidth interconnect and 30 TB shared memory, handling expert-parallel workloads as a single unified accelerator. That removes classic bottlenecks for throughput, latency, and memory.
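The "only activate a subset of experts" idea can be sketched in a few lines of plain Python. This is a toy illustration of top-k routing, not NVIDIA's implementation or any particular model's: the eight "experts" are simple scaling functions and the gate scores are made-up numbers standing in for a learned router's output.

```python
import math


def softmax(scores):
    """Convert raw gate scores into probabilities."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_scores, k):
    """Pick the k highest-scoring experts and renormalise their weights."""
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x, experts, gate_scores, k=2):
    """Run only the selected experts and blend their outputs by weight."""
    selected = route_top_k(gate_scores, k)
    output = sum(weight * experts[i](x) for i, weight in selected)
    return output, [i for i, _ in selected]


# Eight toy experts: each one just scales its input by a different factor.
experts = [lambda x, f=f: x * f for f in range(1, 9)]
gate_scores = [0.1, 2.0, -1.0, 0.3, 1.5, 0.0, -0.5, 0.2]  # made-up router output

output, used = moe_forward(3.0, experts, gate_scores, k=2)
print(f"experts used: {used} of {len(experts)}; output = {output:.3f}")
```

Only two of the eight experts do any work for this input. In a real deployment those experts live on different GPUs, which is why interconnect bandwidth and shared memory matter so much for MoE inference.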

What it enables for Industry 4.0 & AI-powered manufacturing:

- AI-driven applications such as predictive maintenance, quality inspection, real-time anomaly detection or digital twins often need to run inference on large models across many data streams. With 10× speed-ups, they become far more practical and cost-efficient.

- Cloud providers and AI infrastructure vendors are already rolling out GB200-powered offerings — meaning manufacturing, energy, and industrial compute users can access this performance without building custom hardware.

- As MoE becomes the default architecture for frontier LLMs and multimodal agents, these hardware gains may help AI move from experimental to ubiquitous in mission-critical operations where latency, scale and cost matter.

👉 Read the full article on the performance leap

A Word from This Week's Sponsor

HiveMQ continues to lead the industry in reliable, scalable, secure industrial data movement — enabling teams to connect OT and IT systems with confidence. At ProveIt! 2025, they stole the show with the launch of HiveMQ Pulse, an observability and intelligence layer designed specifically for MQTT-based architectures. It was the biggest announcement of the conference — and Pulse is already proving to be a transformative tool for anyone building real-time, UNS-driven systems.

In 2026, folks will get to see Pulse in full effect, along with HiveMQ’s continued innovations in:

- High-availability, enterprise MQTT clusters

- Real-time UNS data flow and governance

- Deep visibility into client behavior, topic performance, and data reliability

- AI-assisted operational insights

HiveMQ is not just a broker — it’s the data infrastructure layer enabling scalable digital transformation, advanced analytics, and the next generation of industrial AI.

We’re proud to feature HiveMQ as our newsletter sponsor and as a Gold Sponsor for ProveIt! 2026.

👉 Learn more about HiveMQ at HiveMQ.com

Or Get Started for Free: https://www.hivemq.com/company/get-hivemq/

React2Shell Exposes Critical RCE Risk — If You Use React 19 Server Components, Patch Now

A newly disclosed critical vulnerability dubbed React2Shell (CVE-2025-55182) affects React Server Components and the frameworks built on them (like Next.js), allowing unauthenticated remote code execution via a single crafted HTTP request.

What it does:

- The vulnerability stems from unsafe deserialization of data sent to React Server Function endpoints — in other words, specially crafted payloads can trick the server into executing attacker-controlled code.

- It affects the react-server-dom-webpack, react-server-dom-parcel, and react-server-dom-turbopack packages in versions 19.0, 19.1.0, 19.1.1, and 19.2.0, as well as Next.js App Router deployments on many 15.x and 16.x versions.

Why it matters for industrial-scale and digital-transformation projects:

Many modern manufacturing, IIoT, and enterprise web-portal systems rely on React + Next.js to deliver dashboards, operator interfaces, quality tracking, and more. If one of those applications is exposed to the internet (or even to an internal network), this bug can let an attacker install malware, exfiltrate data, or move laterally inside the infrastructure, with no credentials required. React is widely adopted and RSC setups are vulnerable in their default configuration, which makes this an especially serious threat.

What you should do right now:

- Upgrade React Server Components packages to fixed versions: 19.0.1, 19.1.2, or 19.2.1 (also ensure related packages like react-server-dom-parcel/webpack/turbopack are patched).

- For Next.js: update to the patched release for your release line (the fixes range from 15.0.5 to 16.0.7) or newer.

- If patching will take time, deploy a Web Application Firewall (WAF) or runtime monitoring rule to block suspicious payloads targeting RSC endpoints. Researchers have already published detection rules, and proof-of-concept exploits are publicly available.
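As a quick triage aid, the version lists above can be turned into a small check. This is a minimal sketch under stated assumptions: it only knows the affected and fixed versions quoted in this newsletter, it treats "19.0" as 19.0.0, and the `declared` dictionary is a hypothetical stand-in for your own dependency list. It reads declared versions rather than what is actually installed, so confirm against your lockfile and the official advisory.

```python
# Versions as quoted above; "19.0" is assumed to mean 19.0.0.
AFFECTED = {"19.0.0", "19.1.0", "19.1.1", "19.2.0"}
FIXED_BY_MINOR = {"19.0": "19.0.1", "19.1": "19.1.2", "19.2": "19.2.1"}


def normalize(version):
    """Strip simple range operators (^, ~, >=, v) and pad to major.minor.patch."""
    parts = version.strip().lstrip("^~<>=v ").split(".")
    parts += ["0"] * (3 - len(parts))
    return ".".join(parts[:3])


def check(version):
    """Return the fixed version to upgrade to, or None if not in the affected list."""
    v = normalize(version)
    if v not in AFFECTED:
        return None
    minor = ".".join(v.split(".")[:2])
    return FIXED_BY_MINOR[minor]


# Hypothetical dependency list, e.g. copied out of a package.json.
declared = {
    "react-server-dom-webpack": "^19.1.0",
    "react-server-dom-turbopack": "19.2.1",
    "react-server-dom-parcel": "19.0",
}

for package, version in declared.items():
    fix = check(version)
    status = f"AFFECTED, upgrade to {fix}" if fix else "not in the affected list"
    print(f"{package} {version}: {status}")
```

A `None` result only means the version is not one of the four listed here; it is not proof that a deployment is safe.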

👉 Read the Sysdig deep dive on detection & mitigation

Learning Lens

Advanced MCP + Agent to Agent: The Workshop You've Been Asking For

If you've been building with MCP and wondering how to take it to the next level—multi-server architectures, agent orchestration, and distributed intelligence—this one's for you.

On December 16-17, Walker Reynolds is running a live, two-day workshop that goes deep on Advanced MCP and Agent2Agent (A2A) protocols. This isn't theory—it's hands-on implementation of the patterns that enable collaborative AI systems in manufacturing.

Here's what you'll build:

- Multi-server MCP architectures with server registration, authentication, and message routing

- Agent2Agent communication protocols where specialized AI agents collaborate to solve complex industrial problems

- Production-ready patterns for orchestrating distributed intelligence across factory systems

The Format:

- Day 1: Advanced MCP multi-server architectures (December 16, 9am-1pm CDT)

- Day 2: Agent2Agent collaborative intelligence (December 17, 9am-1pm CDT)

- Live follow-along coding + full recording access for all registrants

Early Bird Pricing: $375 through November 14 (regular $750)

Whether you're architecting UNS environments, building agentic AI systems, or just tired of single-server MCP limitations, this workshop gives you the architecture patterns and implementation playbook to scale.

Why it matters: MCP is rapidly becoming the backbone for connecting AI agents to industrial data. Understanding how to orchestrate multiple servers and enable agent-to-agent collaboration isn't just a nice-to-have—it's the foundation for autonomous factory operations.

Byte-Sized Brilliance

December Is the Only Month Where Your Uptime Depends on Holiday Lights

Believe it or not, power companies report a measurable bump in local brownouts every December… thanks to decorative holiday light displays. And yes — that means some on-prem servers, edge devices, and dusty factory PCs have historically gone down because someone across the street went full-Clark-Griswold with 40,000 LEDs.

It’s the only time of year when observability dashboards and neighborhood light shows have something in common: both can take the grid to its limits.
